AI Labor Is Boring. AI Lust Is Big Business

After years of hype about generative AI increasing productivity and making lives easier, 2025 was the year erotic chatbots defined AI’s narrative.

Cloudflare has open sourced tokio-quiche, an asynchronous QUIC and HTTP/3 Rust library that wraps its battle-tested quiche implementation with the Tokio runtime. The library has been refined inside production systems such as Apple iCloud Private Relay, next-generation Oxy-based proxies and WARP’s MASQUE client, where it handles millions of HTTP/3 requests per second with low latency and high throughput. tokio-quiche targets Rust teams that want QUIC and HTTP/3 without writing their own UDP and event loop integration code.

quiche is Cloudflare’s open source QUIC and HTTP/3 implementation, written in Rust and designed as a low-level, sans-io library. It implements the QUIC transport state machine, including connection establishment, flow control and stream multiplexing, while making no assumptions about how applications perform IO. To use quiche directly, integrators must open UDP sockets, send and receive datagrams, manage timers and feed all packet data into quiche in the correct order. This design gives flexibility, but it makes integration error-prone and time-consuming.

tokio-quiche packages this integration work into a reusable crate. It combines the sans-io QUIC or HTTP/3 implementation from quiche with the Tokio async runtime, and exposes an API that already manages UDP sockets, packet routing and calls into the quiche state machine.

Internally, tokio-quiche uses an actor model on top of Tokio. Actors are small tasks with local state that communicate through message passing over channels, which aligns well with sans-io protocol implementations that own internal state and operate on message like buffers.

The primary actor is the IO loop actor, which moves packets between quiche and the UDP socket. One of the key message types is an Incoming struct that describes received UDP packets. Async integration follows a fixed pattern: the IO loop awaits new messages, translates them into inputs for quiche, advances the QUIC state machine, then translates outputs into outbound packets that are written back to the socket.
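The loop described above can be sketched in a few lines of Python asyncio. This illustrates the sans-io pattern only and is not tokio-quiche's API: `ToyProtocol`, `io_loop`, the `None` stop sentinel and the in-memory queues standing in for the UDP socket are all hypothetical.

```python
import asyncio

class ToyProtocol:
    """Hypothetical sans-io state machine: it never touches a socket."""

    def __init__(self):
        self.outbox = []

    def recv(self, datagram: bytes) -> None:
        # Feed one inbound packet; this toy protocol echoes it in upper case.
        self.outbox.append(datagram.upper())

    def send(self):
        # Drain the packets the state machine wants written to the network.
        out, self.outbox = self.outbox, []
        return out

async def io_loop(proto, inbound: asyncio.Queue, outbound: asyncio.Queue):
    # Await a message, feed the state machine, then flush its output.
    while True:
        datagram = await inbound.get()
        if datagram is None:  # sentinel used by this sketch to stop the loop
            break
        proto.recv(datagram)
        for packet in proto.send():
            await outbound.put(packet)

async def demo():
    inbound, outbound = asyncio.Queue(), asyncio.Queue()
    for item in (b"ping", b"data", None):
        inbound.put_nowait(item)
    await io_loop(ToyProtocol(), inbound, outbound)
    return [outbound.get_nowait() for _ in range(outbound.qsize())]

result = asyncio.run(demo())
print(result)
```

Because the protocol object never performs IO itself, the same state machine could be driven by a different runtime or by a test harness without changes.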

For each UDP socket, tokio-quiche spawns two important tasks. InboundPacketRouter owns the receiving half of the socket and routes inbound datagrams by destination connection ID to per connection channels. IoWorker is the per connection IO loop and drives a single quiche Connection, interleaving calls to quiche with calls to application specific logic implemented through ApplicationOverQuic. This design encapsulates connection state inside each actor and keeps QUIC processing isolated from higher level protocol code.
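The routing step can be pictured as a fan-out from one inbound stream to per-connection queues. The sketch below is a simplified illustration, not the InboundPacketRouter implementation: `ToyPacketRouter` is a hypothetical name, and it assumes the destination connection ID is simply the first four bytes of each datagram, whereas real QUIC headers are more involved.

```python
from collections import defaultdict, deque

class ToyPacketRouter:
    """Hypothetical router: one inbound stream fanned out to per-connection queues."""

    def __init__(self, cid_len: int = 4):
        self.cid_len = cid_len
        self.queues = defaultdict(deque)

    def route(self, datagram: bytes) -> bytes:
        # Assumption for this sketch: the destination connection ID is the
        # first cid_len bytes of the datagram.
        dcid = datagram[: self.cid_len]
        self.queues[dcid].append(datagram[self.cid_len:])
        return dcid

router = ToyPacketRouter()
for pkt in (b"AAAAhello", b"BBBBhi", b"AAAAworld"):
    router.route(pkt)

routed = {cid: list(q) for cid, q in router.queues.items()}
print(routed)
```

Each queue would then be consumed by its own per-connection worker, which is what keeps one connection's state out of another's.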

QUIC is a transport protocol and can carry multiple application protocols. HTTP/3, DNS over QUIC and Media over QUIC are examples covered by IETF specifications. To avoid coupling tokio-quiche to a single protocol, the Cloudflare team exposes an ApplicationOverQuic trait. The trait abstracts over quiche methods and the underlying IO, and presents higher-level events and hooks to the application that implements the protocol. For example, the HTTP/3 debug and test client h3i uses a non-HTTP/3 implementation of ApplicationOverQuic.

On top of this trait, tokio-quiche ships a dedicated HTTP/3 focused implementation named H3Driver. H3Driver connects quiche’s HTTP/3 module to the IO loop actor and converts raw HTTP/3 events into higher level events with asynchronous body streams that are convenient for application code. H3Driver is generic and exposes ServerH3Driver and ClientH3Driver variants that add server side and client side behavior on top of the core driver. These components provide the building blocks for HTTP/3 servers and clients that share implementation patterns with Cloudflare’s internal infrastructure.

tokio-quiche was used inside Cloudflare for several years before its public release. It powers Proxy B in Apple iCloud Private Relay, Oxy-based HTTP/3 servers and the WARP MASQUE client, as well as the async version of h3i. In the WARP client, MASQUE-based tunnels built on tokio-quiche replace the earlier WireGuard-based tunnels. These systems run at Cloudflare edge scale and demonstrate that the integration can sustain millions of HTTP/3 requests per second in production.

Cloudflare positions tokio-quiche as a foundation rather than a complete HTTP/3 framework. The library exposes low level protocol capabilities and example client and server event loops, and leaves room for higher level projects to implement opinionated HTTP servers, DNS over QUIC clients, MASQUE based VPNs and other QUIC applications on top. By releasing the crate, Cloudflare aims to lower the barrier for Rust teams to adopt QUIC, HTTP/3 and MASQUE, and to align external integrations with the same transport stack used in its edge services.

The architecture pairs an InboundPacketRouter, which routes UDP datagrams by connection ID, with an IoWorker that drives a single quiche Connection per task, keeping transport state isolated and composable.

The ApplicationOverQuic trait abstracts over quiche and I/O details, so different QUIC-based protocols such as HTTP/3, DNS over QUIC or custom protocols can be implemented on top of the same transport core.

H3Driver, plus its ServerH3Driver and ClientH3Driver variants, bridges quiche’s HTTP/3 module to async Rust code, exposing HTTP/3 streams and bodies in a way that fits typical Tokio-based services.

In this tutorial, we implement an agentic AI pattern using LangGraph that treats reasoning and action as a transactional workflow rather than a single-shot decision. We model a two-phase commit system in which an agent stages reversible changes, validates strict invariants, pauses for human approval via graph interrupts, and only then commits or rolls back. With this, we demonstrate how agentic systems can be designed with safety, auditability, and controllability at their core, moving beyond reactive chat agents toward structured, governance-aware AI workflows that run reliably in Google Colab using OpenAI models.

!pip -q install -U langgraph langchain-openai

import os, json, uuid, copy, math, re, operator
from typing import Any, Dict, List, Optional
from typing_extensions import TypedDict, Annotated

from langchain_openai import ChatOpenAI
from langchain_core.messages import SystemMessage, HumanMessage, AIMessage, AnyMessage
from langgraph.graph import StateGraph, START, END
from langgraph.graph.message import add_messages
from langgraph.checkpoint.memory import InMemorySaver
from langgraph.types import interrupt, Command

def _set_env_openai():
    # Prefer an existing environment variable, then Colab secrets, then a hidden prompt.
    if os.environ.get("OPENAI_API_KEY"):
        return
    try:
        from google.colab import userdata
        k = userdata.get("OPENAI_API_KEY")
        if k:
            os.environ["OPENAI_API_KEY"] = k
            return
    except Exception:
        pass
    import getpass
    os.environ["OPENAI_API_KEY"] = getpass.getpass("Enter OPENAI_API_KEY: ")

_set_env_openai()

MODEL = os.environ.get("OPENAI_MODEL", "gpt-4o-mini")
llm = ChatOpenAI(model=MODEL, temperature=0)

We set up the execution environment by installing LangGraph and initializing the OpenAI model. We load the API key without hard-coding it and set the temperature to 0, which reduces run-to-run variation in the model output so that downstream agent behavior is easier to reproduce and control.

SAMPLE_LEDGER = [
    {"txn_id": "T001", "name": "Asha", "email": "ASHA@Example.com", "amount": "1,250.50", "date": "12/01/2025", "note": "Membership renewal"},
    {"txn_id": "T002", "name": "Ravi", "email": "ravi@example.com", "amount": "-500", "date": "2025-12-02", "note": "Chargeback?"},
    {"txn_id": "T003", "name": "Sara", "email": "sara@example.com", "amount": "700", "date": "02-12-2025", "note": "Late fee waived"},
    {"txn_id": "T003", "name": "Sara", "email": "sara@example.com", "amount": "700", "date": "02-12-2025", "note": "Duplicate row"},
    {"txn_id": "T004", "name": "Lee", "email": "lee@example.com", "amount": "NaN", "date": "2025/12/03", "note": "Bad amount"},
]

ALLOWED_OPS = {"replace", "remove", "add"}

def _parse_amount(x):
    # Return a finite float, or None when the value cannot be parsed.
    if isinstance(x, (int, float)):
        v = float(x)
    elif isinstance(x, str):
        try:
            v = float(x.replace(",", ""))
        except ValueError:
            return None
    else:
        return None
    # float("NaN") parses successfully, so reject non-finite values explicitly.
    return v if math.isfinite(v) else None

def _iso_date(d):
    # Accept YYYY-MM-DD as is; read a trailing four-digit year as DD-MM-YYYY.
    if not isinstance(d, str):
        return None
    d = d.replace("/", "-")
    p = d.split("-")
    if len(p) == 3 and len(p[0]) == 4:
        return d
    if len(p) == 3 and len(p[2]) == 4:
        return f"{p[2]}-{p[1]}-{p[0]}"
    return None

def profile_ledger(rows):
    # Flag rows with unparseable amounts or a txn_id that was already seen.
    seen, anomalies = {}, []
    for i, r in enumerate(rows):
        if _parse_amount(r.get("amount")) is None:
            anomalies.append(i)
        if r.get("txn_id") in seen:
            anomalies.append(i)
        seen[r.get("txn_id")] = i
    return {"rows": len(rows), "anomalies": anomalies}

def apply_patch(rows, patch):
    # Work on a deep copy so the raw rows are never modified.
    out = copy.deepcopy(rows)
    # Remove from the highest index down so earlier removals do not shift later ones.
    for op in sorted([p for p in patch if p["op"] == "remove"], key=lambda x: x["idx"], reverse=True):
        out.pop(op["idx"])
    # Note: add/replace indices refer to the rows that remain after removals.
    for op in patch:
        if op["op"] in {"add", "replace"}:
            out[op["idx"]][op["field"]] = op["value"]
    return out

def validate(rows):
    # A row fails when its amount is not a finite number or its date cannot be normalized.
    issues = []
    for i, r in enumerate(rows):
        if _parse_amount(r.get("amount")) is None:
            issues.append(i)
        if _iso_date(r.get("date")) is None:
            issues.append(i)
    return {"ok": len(issues) == 0, "issues": issues}

We define the core ledger abstraction along with the patching, normalization, and validation logic. Because apply_patch works on a deep copy, the raw rows stay untouched and any staged change can be discarded, which lets the agent reason about changes safely before committing them.

class TxnState(TypedDict):
    messages: Annotated[List[AnyMessage], add_messages]
    raw_rows: List[Dict[str, Any]]
    sandbox_rows: List[Dict[str, Any]]
    patch: List[Dict[str, Any]]
    validation: Dict[str, Any]
    approved: Optional[bool]

def node_profile(state):
    p = profile_ledger(state["raw_rows"])
    return {"messages": [AIMessage(content=json.dumps(p))]}

def node_patch(state):
    # Spell out the operation schema so the reply matches what apply_patch expects.
    sys = SystemMessage(content=(
        "Return only a JSON list of patch operations that fix amounts, dates, emails and duplicates. "
        'Each operation is an object with keys "op" (replace, add or remove), "idx" (row index), '
        'and, for replace or add, "field" and "value".'
    ))
    usr = HumanMessage(content=json.dumps(state["raw_rows"]))
    r = llm.invoke([sys, usr])
    # Extract the outermost JSON array; the brackets must be escaped in the pattern.
    patch = json.loads(re.search(r"\[.*\]", r.content, re.S).group())
    return {"patch": patch, "messages": [AIMessage(content=json.dumps(patch))]}

def node_apply(state):
    return {"sandbox_rows": apply_patch(state["raw_rows"], state["patch"])}

def node_validate(state):
    v = validate(state["sandbox_rows"])
    return {"validation": v, "messages": [AIMessage(content=json.dumps(v))]}

def node_approve(state):
    # interrupt() pauses the graph here and returns the value passed on resume.
    decision = interrupt({"validation": state["validation"]})
    return {"approved": decision == "approve"}

def node_commit(state):
    return {"messages": [AIMessage(content="COMMITTED")]}

def node_rollback(state):
    return {"messages": [AIMessage(content="ROLLED BACK")]}

We model the agent’s internal state and define each node in the LangGraph workflow. We express agent behavior as discrete, inspectable steps that transform state while preserving message history.

builder = StateGraph(TxnState)

builder.add_node("profile", node_profile)
builder.add_node("patch", node_patch)
builder.add_node("apply", node_apply)
builder.add_node("validate", node_validate)
builder.add_node("approve", node_approve)
builder.add_node("commit", node_commit)
builder.add_node("rollback", node_rollback)

builder.add_edge(START, "profile")
builder.add_edge("profile", "patch")
builder.add_edge("patch", "apply")
builder.add_edge("apply", "validate")

builder.add_conditional_edges(
    "validate",
    lambda s: "approve" if s["validation"]["ok"] else "rollback",
    {"approve": "approve", "rollback": "rollback"}
)

builder.add_conditional_edges(
    "approve",
    lambda s: "commit" if s["approved"] else "rollback",
    {"commit": "commit", "rollback": "rollback"}
)

builder.add_edge("commit", END)
builder.add_edge("rollback", END)

app = builder.compile(checkpointer=InMemorySaver())

We construct the LangGraph state machine and explicitly encode the control flow between profiling, patching, validation, approval, and finalization. We use conditional edges to enforce governance rules rather than rely on implicit model decisions.

def run():
    state = {
        "messages": [],
        "raw_rows": SAMPLE_LEDGER,
        "sandbox_rows": [],
        "patch": [],
        "validation": {},
        "approved": None,
    }
    cfg = {"configurable": {"thread_id": "txn-demo"}}
    out = app.invoke(state, config=cfg)
    if "__interrupt__" in out:
        # Interrupt objects are not JSON serializable, so print their payloads.
        payload = [i.value for i in out["__interrupt__"]]
        print(json.dumps(payload, indent=2))
        decision = input("approve / reject: ").strip()
        out = app.invoke(Command(resume=decision), config=cfg)
    print(out["messages"][-1].content)

run()

We run the transactional agent and handle human-in-the-loop approval through graph interrupts. When the graph pauses at the approval node, we print the interrupt payload, read the reviewer's decision and resume the same thread, demonstrating how agentic workflows can pause, accept external input, and safely conclude with either a commit or rollback.
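Stripped of LangGraph and the LLM, the control flow reduces to a small stage, validate, approve, commit-or-rollback routine. The sketch below is a plain-Python illustration under that reading; `two_phase_commit`, `strip_commas` and `all_numeric` are hypothetical helpers that do not appear in the tutorial code.

```python
import copy

def two_phase_commit(store, patch_fn, validate_fn, approve_fn):
    """Stage changes on a copy, validate, ask for approval, then commit or roll back."""
    staged = patch_fn(copy.deepcopy(store))  # phase 1: stage on a sandbox copy
    if not validate_fn(staged):
        return store, "ROLLED BACK (validation)"
    if not approve_fn(staged):
        return store, "ROLLED BACK (rejected)"
    return staged, "COMMITTED"  # phase 2: promote the sandbox

rows = [{"amount": "1,250.50"}, {"amount": "700"}]

def strip_commas(rs):
    # Toy patch: remove thousands separators from every amount.
    for r in rs:
        r["amount"] = r["amount"].replace(",", "")
    return rs

def all_numeric(rs):
    # Toy invariant: every amount is digits with at most one decimal point.
    return all(r["amount"].replace(".", "", 1).isdigit() for r in rs)

committed, status_ok = two_phase_commit(rows, strip_commas, all_numeric, lambda _: True)
unchanged, status_no = two_phase_commit(rows, strip_commas, all_numeric, lambda _: False)
print(status_ok, committed)
print(status_no, unchanged)
```

In both runs the original `rows` list is left as it was; only an approved, validated sandbox is ever returned as the new state.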

In conclusion, we showed how LangGraph enables us to build agents that reason over states, enforce validation gates, and collaborate with humans at precisely defined control points. We treated the agent not as an oracle, but as a transaction coordinator that can stage, inspect, and reverse its own actions while maintaining a full audit trail. This approach highlights how agentic AI can be applied to real-world systems that require trust, compliance, and recoverability, and it provides a practical foundation for building production-grade autonomous workflows that remain safe, transparent, and human-supervised.

Dating apps and AI companies have been touting bot wingmen for months. But the future might just be good old-fashioned meet-cutes.

Tencent Hunyuan’s 3D Digital Human team has released HY-Motion 1.0, an open weight text-to-3D human motion generation family that scales Diffusion Transformer based Flow Matching to 1B parameters in the motion domain. The models turn natural language prompts plus an expected duration into 3D human motion clips on a unified SMPL-H skeleton and are available on GitHub and Hugging Face with code, checkpoints and a Gradio interface for local use.

HY-Motion 1.0 is a series of text-to-3D human motion generation models built on a Diffusion Transformer, DiT, trained with a Flow Matching objective. The model series showcases 2 variants, HY-Motion-1.0 with 1.0B parameters as the standard model and HY-Motion-1.0-Lite with 0.46B parameters as a lightweight option.

Both models generate skeleton based 3D character animations from simple text prompts. The output is a motion sequence on an SMPL-H skeleton that can be integrated into 3D animation or game pipelines, for example for digital humans, cinematics and interactive characters. The release includes inference scripts, a batch oriented CLI and a Gradio web app, and supports macOS, Windows and Linux.

The training data comes from 3 sources: in-the-wild human motion videos, motion capture data and 3D animation assets for game production. The research team starts from 12M high quality video clips from HunyuanVideo, runs shot boundary detection to split scenes and a human detector to keep clips with people, then applies the GVHMR algorithm to reconstruct SMPL-X motion tracks. Motion capture sessions and 3D animation libraries contribute about 500 hours of additional motion sequences.

All data is retargeted onto a unified SMPL-H skeleton through mesh fitting and retargeting tools. A multi stage filter removes duplicate clips, abnormal poses, outliers in joint velocity, anomalous displacements, long static segments and artifacts such as foot sliding. Motions are then canonicalized, resampled to 30 fps and segmented into clips shorter than 12 seconds with a fixed world frame, Y axis up and the character facing the positive Z axis. The final corpus contains over 3,000 hours of motion, of which 400 hours are high quality 3D motion with verified captions.

On top of this, the research team defines a 3 level taxonomy. At the top level there are 6 classes: Locomotion, Sports and Athletics, Fitness and Outdoor Activities, Daily Activities, Social Interactions and Leisure, and Game Character Actions. These expand into more than 200 fine grained motion categories at the leaves, which cover both simple atomic actions and concurrent or sequential motion combinations.

HY-Motion 1.0 uses the SMPL-H skeleton with 22 body joints without hands. Each frame is a 201 dimensional vector that concatenates global root translation in 3D space, global body orientation in a continuous 6D rotation representation, 21 local joint rotations in 6D form and 22 local joint positions in 3D coordinates. Velocities and foot contact labels are removed because they slowed training and did not help final quality. This representation is compatible with animation workflows and close to the DART model representation.
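The 201 figure follows directly from the components listed above, and the arithmetic can be checked in a few lines. The constant names are illustrative only; the clip-length bound combines the 30 fps and 12 second figures given earlier.

```python
ROOT_TRANSLATION = 3      # global root translation (x, y, z)
ROOT_ORIENTATION = 6      # global body orientation, continuous 6D rotation
LOCAL_ROTATIONS = 21 * 6  # 21 local joint rotations in 6D form
LOCAL_POSITIONS = 22 * 3  # 22 local joint positions in 3D

frame_dim = ROOT_TRANSLATION + ROOT_ORIENTATION + LOCAL_ROTATIONS + LOCAL_POSITIONS
max_frames = 12 * 30      # upper bound: clips are shorter than 12 s at 30 fps

print(frame_dim)   # 3 + 6 + 126 + 66 = 201
print(max_frames)  # 360
```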

The core network is a hybrid HY Motion DiT. It first applies dual stream blocks that process motion latents and text tokens separately. In these blocks, each modality has its own QKV projections and MLP, and a joint attention module allows motion tokens to query semantic features from text tokens while keeping modality specific structure. The network then switches to single stream blocks that concatenate motion and text tokens into one sequence and process them with parallel spatial and channel attention modules to perform deeper multimodal fusion.

For text conditioning, the system uses a dual encoder scheme. Qwen3 8B provides token level embeddings, while a CLIP-L model provides global text features. A Bidirectional Token Refiner fixes the causal attention bias of the LLM for non autoregressive generation. These signals feed the DiT through adaptive layer normalization conditioning. Attention is asymmetric: motion tokens can attend to all text tokens, but text tokens do not attend back to motion, which prevents noisy motion states from corrupting the language representation. Temporal attention inside the motion branch uses a narrow sliding window of 121 frames, which focuses capacity on local kinematics while keeping cost manageable for long clips. Full Rotary Position Embedding is applied after concatenating text and motion tokens to encode relative positions across the whole sequence.
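One way to picture the asymmetric attention and the sliding window is as a boolean mask over a `[text..., motion...]` token sequence. The sketch below is an interpretation, not the released implementation: it assumes both rules live in a single mask and that the window is centred on each frame, neither of which is stated above. `build_attention_mask` is a hypothetical helper.

```python
def build_attention_mask(n_text: int, n_motion: int, window: int = 121):
    """mask[q][k] is True when query token q may attend to key token k.

    Token order is [text..., motion...]. Assumption: the window is centred,
    so each frame sees window // 2 neighbours on either side.
    """
    half = window // 2
    total = n_text + n_motion
    mask = [[False] * total for _ in range(total)]
    for q in range(total):
        for k in range(total):
            q_text, k_text = q < n_text, k < n_text
            if q_text:
                mask[q][k] = k_text              # text attends to text only
            elif k_text:
                mask[q][k] = True                # motion attends to all text
            else:
                mask[q][k] = abs(q - k) <= half  # motion: local window
    return mask

# Tiny example: 2 text tokens, 5 motion frames, window of 3 frames.
mask = build_attention_mask(n_text=2, n_motion=5, window=3)
for row in mask:
    print("".join("x" if allowed else "." for allowed in row))
```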

HY-Motion 1.0 uses Flow Matching instead of standard denoising diffusion. The model learns a velocity field along a continuous path that interpolates between Gaussian noise and real motion data. During training, the objective is a mean squared error between predicted and ground truth velocities along this path. During inference, the learned ordinary differential equation is integrated from noise to a clean trajectory, which gives stable training for long sequences and fits the DiT architecture.
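A toy version of this objective and sampler fits in a few lines of plain Python. The sketch assumes a straight-line path between noise and data, a common Flow Matching choice that the text above does not confirm for HY-Motion, and it uses the true velocity in place of a trained network. All function names are illustrative.

```python
def interpolate(x0, x1, t):
    # Point on the straight path from noise x0 (t=0) to data x1 (t=1).
    return [(1 - t) * a + t * b for a, b in zip(x0, x1)]

def target_velocity(x0, x1):
    # Along a straight path the ground-truth velocity is constant: x1 - x0.
    return [b - a for a, b in zip(x0, x1)]

def mse(pred, target):
    # Training loss: mean squared error between predicted and true velocity.
    return sum((p - q) ** 2 for p, q in zip(pred, target)) / len(pred)

def euler_sample(velocity_fn, x0, steps=10):
    # Integrate dx/dt = v(x, t) from t=0 to t=1 with fixed-step Euler.
    x, dt = list(x0), 1.0 / steps
    for i in range(steps):
        v = velocity_fn(x, i * dt)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x

noise, data = [0.0, 2.0], [1.0, -1.0]
midpoint = interpolate(noise, data, 0.5)
v_true = target_velocity(noise, data)
loss = mse([0.9, -2.5], v_true)  # loss for an imperfect velocity prediction
sample = euler_sample(lambda x, t: v_true, noise)
print(midpoint, v_true, loss, sample)
```

With a perfect velocity field the integration lands on the data point, which is the behaviour a trained model is meant to approximate.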

A separate Duration Prediction and Prompt Rewrite module improves instruction following. It uses Qwen3 30B A3B as the base model and is trained on synthetic user style prompts generated from motion captions with a VLM and LLM pipeline, for example Gemini 2.5 Pro. This module predicts a suitable motion duration and rewrites informal prompts into normalized text that is easier for the DiT to follow. It is trained first with supervised fine tuning and then refined with Group Relative Policy Optimization, using Qwen3 235B A22B as a reward model that scores semantic consistency and duration plausibility.

Training follows a 3 stage curriculum. Stage 1 performs large scale pretraining on the full 3,000 hour dataset to learn a broad motion prior and basic text motion alignment. Stage 2 fine tunes on the 400 hour high quality set to sharpen motion detail and improve semantic correctness with a smaller learning rate. Stage 3 applies reinforcement learning, first Direct Preference Optimization using 9,228 curated human preference pairs sampled from about 40,000 generated pairs, then Flow GRPO with a composite reward. The reward combines a semantic score from a Text Motion Retrieval model and a physics score that penalizes artifacts like foot sliding and root drift, under a KL regularization term to stay close to the supervised model.

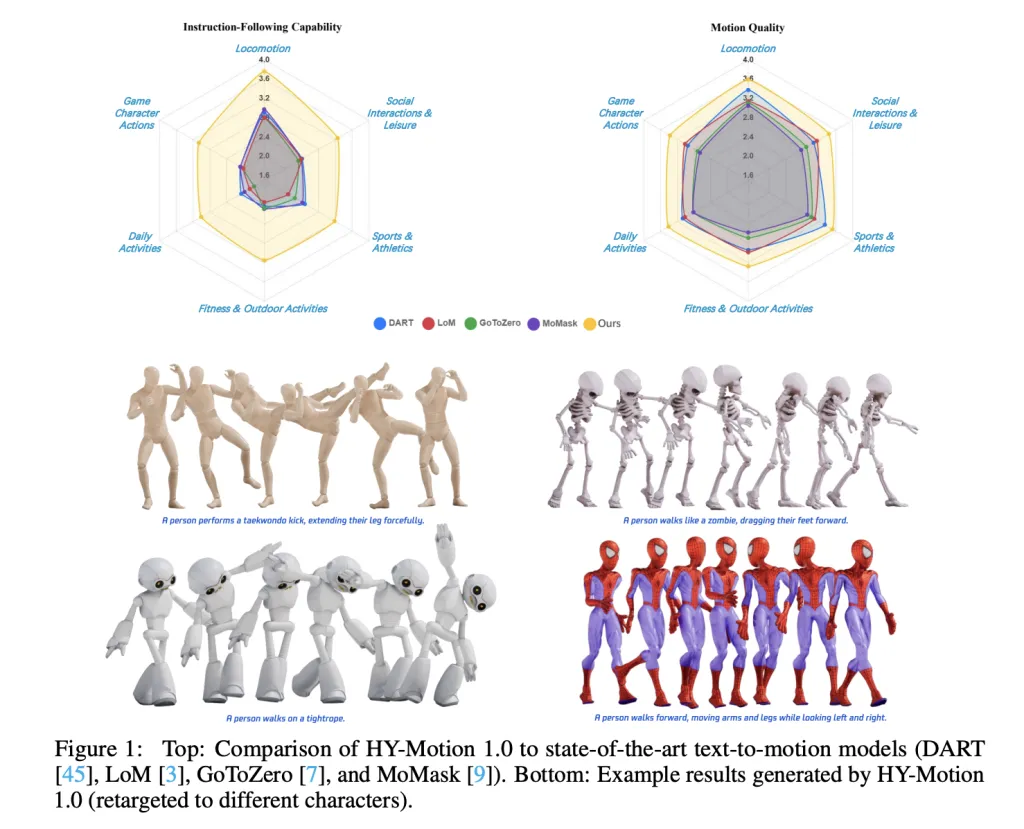

For evaluation, the team builds a test set of over 2,000 prompts that span the 6 taxonomy categories and include simple, concurrent and sequential actions. Human raters score instruction following and motion quality on a scale from 1 to 5. HY-Motion 1.0 reaches an average instruction following score of 3.24 and an SSAE score of 78.6 percent. Baseline text-to-motion systems such as DART, LoM, GoToZero and MoMask achieve scores between 2.17 and 2.31 with SSAE between 42.7 percent and 58.0 percent. For motion quality, HY-Motion 1.0 reaches 3.43 on average versus 3.11 for the best baseline.

Scaling experiments compare four DiT configurations: 0.05B, 0.46B, a 0.46B model trained only on the 400 hour set, and 1B parameters. Instruction following improves steadily with model size, with the 1B model reaching an average of 3.34. Motion quality saturates around the 0.46B scale, where the 0.46B and 1B models reach similar averages between 3.26 and 3.34. Comparison of the 0.46B model trained on 3,000 hours and the 0.46B model trained only on 400 hours shows that larger data volume is key for instruction alignment, while high quality curation mainly improves realism.

In this tutorial, we simulate a privacy-preserving fraud detection system using Federated Learning without relying on heavyweight frameworks or complex infrastructure. We build a clean, CPU-friendly setup that mimics ten independent banks, each training a local fraud-detection model on its own highly imbalanced transaction data. We coordinate these local updates through a simple FedAvg aggregation loop, allowing us to improve a global model while ensuring that no raw transaction data ever leaves a client. Alongside this, we integrate OpenAI to support post-training analysis and risk-oriented reporting, demonstrating how federated learning outputs can be translated into decision-ready insights.

!pip -q install torch scikit-learn numpy openai

import time, random, json, os, getpass
import numpy as np
import torch
import torch.nn as nn
from torch.utils.data import DataLoader, TensorDataset
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler
from sklearn.metrics import roc_auc_score, average_precision_score, accuracy_score
from openai import OpenAI

SEED = 7
random.seed(SEED); np.random.seed(SEED); torch.manual_seed(SEED)

DEVICE = torch.device("cpu")
print("Device:", DEVICE)

We set up the execution environment and import all required libraries for data generation, modeling, evaluation, and reporting. We also fix random seeds and the device configuration to ensure our federated simulation remains deterministic and reproducible on CPU.

X, y = make_classification(
    n_samples=60000,
    n_features=30,
    n_informative=18,
    n_redundant=8,
    weights=[0.985, 0.015],
    class_sep=1.5,
    flip_y=0.01,
    random_state=SEED
)

X = X.astype(np.float32)
y = y.astype(np.int64)

X_train_full, X_test, y_train_full, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=SEED
)

server_scaler = StandardScaler()
X_train_full_s = server_scaler.fit_transform(X_train_full).astype(np.float32)
X_test_s = server_scaler.transform(X_test).astype(np.float32)

test_loader = DataLoader(
    TensorDataset(torch.from_numpy(X_test_s), torch.from_numpy(y_test)),
    batch_size=1024,
    shuffle=False
)

We generate a highly imbalanced, credit-card-like fraud dataset and split it into training and test sets. We standardize the server-side data and prepare a global test loader that allows us to consistently evaluate the aggregated model after each federated round.

def dirichlet_partition(y, n_clients=10, alpha=0.35):
    # Split each class across clients with Dirichlet-distributed proportions.
    classes = np.unique(y)
    idx_by_class = [np.where(y == c)[0] for c in classes]
    client_idxs = [[] for _ in range(n_clients)]
    for idxs in idx_by_class:
        np.random.shuffle(idxs)
        props = np.random.dirichlet(alpha * np.ones(n_clients))
        cuts = (np.cumsum(props) * len(idxs)).astype(int)
        cuts[-1] = len(idxs)  # guard against rounding dropping the last samples
        prev = 0
        for cid, cut in enumerate(cuts):
            client_idxs[cid].extend(idxs[prev:cut].tolist())
            prev = cut
    return [np.array(ci, dtype=np.int64) for ci in client_idxs]

NUM_CLIENTS = 10
client_idxs = dirichlet_partition(y_train_full, NUM_CLIENTS, 0.35)

def make_client_split(X, y, idxs):
    Xi, yi = X[idxs], y[idxs]
    # If a client received a single class, add a few samples of the other one.
    if len(np.unique(yi)) < 2:
        other = np.where(y == (1 - yi[0]))[0]
        add = np.random.choice(other, size=min(10, len(other)), replace=False)
        Xi = np.concatenate([Xi, X[add]])
        yi = np.concatenate([yi, y[add]])
    return train_test_split(Xi, yi, test_size=0.15, stratify=yi, random_state=SEED)

client_data = [make_client_split(X_train_full, y_train_full, client_idxs[c]) for c in range(NUM_CLIENTS)]

def make_client_loaders(Xtr, ytr, Xva, yva):
    # Each client fits its own scaler on its local training data only.
    sc = StandardScaler()
    Xtr_s = sc.fit_transform(Xtr).astype(np.float32)
    Xva_s = sc.transform(Xva).astype(np.float32)
    tr = DataLoader(TensorDataset(torch.from_numpy(Xtr_s), torch.from_numpy(ytr)), batch_size=512, shuffle=True)
    va = DataLoader(TensorDataset(torch.from_numpy(Xva_s), torch.from_numpy(yva)), batch_size=512)
    return tr, va

client_loaders = [make_client_loaders(*cd) for cd in client_data]

We simulate realistic non-IID behavior by partitioning the training data across ten clients using a Dirichlet distribution. We then create independent client-level train and validation loaders, ensuring that each simulated bank operates on its own locally scaled data.

class FraudNet(nn.Module):
    def __init__(self, in_dim):
        super().__init__()
        self.net = nn.Sequential(
            nn.Linear(in_dim, 64),
            nn.ReLU(),
            nn.Dropout(0.1),
            nn.Linear(64, 32),
            nn.ReLU(),
            nn.Dropout(0.1),
            nn.Linear(32, 1)
        )

    def forward(self, x):
        # Return one logit per sample.
        return self.net(x).squeeze(-1)

def get_weights(model):
    return [p.detach().cpu().numpy() for p in model.state_dict().values()]

def set_weights(model, weights):
    keys = list(model.state_dict().keys())
    model.load_state_dict({k: torch.tensor(w) for k, w in zip(keys, weights)}, strict=True)

@torch.no_grad()
def evaluate(model, loader):
    model.eval()
    bce = nn.BCEWithLogitsLoss()
    ys, ps, losses = [], [], []
    for xb, yb in loader:
        logits = model(xb)
        losses.append(bce(logits, yb.float()).item())
        ys.append(yb.numpy())
        ps.append(torch.sigmoid(logits).numpy())
    y_true = np.concatenate(ys)
    y_prob = np.concatenate(ps)
    return {
        "loss": float(np.mean(losses)),
        "auc": roc_auc_score(y_true, y_prob),
        "ap": average_precision_score(y_true, y_prob),
        "acc": accuracy_score(y_true, (y_prob >= 0.5).astype(int))
    }

def train_local(model, loader, lr):
    # One local epoch of Adam on the client's own data.
    opt = torch.optim.Adam(model.parameters(), lr=lr)
    bce = nn.BCEWithLogitsLoss()
    model.train()
    for xb, yb in loader:
        opt.zero_grad()
        loss = bce(model(xb), yb.float())
        loss.backward()
        opt.step()

We define the neural network used for fraud detection along with utility functions for training, evaluation, and weight exchange. We implement lightweight local optimization and metric computation to keep client-side updates efficient and easy to reason about.

def fedavg(weights, sizes):
    # Average each parameter tensor, weighted by the client's sample count.
    total = sum(sizes)
    return [
        sum(w[i] * (s / total) for w, s in zip(weights, sizes))
        for i in range(len(weights[0]))
    ]

ROUNDS = 10
LR = 5e-4

global_model = FraudNet(X_train_full.shape[1])
global_weights = get_weights(global_model)

for r in range(1, ROUNDS + 1):
    client_weights, client_sizes = [], []
    for cid in range(NUM_CLIENTS):
        # Each client starts the round from the current global weights.
        local = FraudNet(X_train_full.shape[1])
        set_weights(local, global_weights)
        train_local(local, client_loaders[cid][0], LR)
        client_weights.append(get_weights(local))
        client_sizes.append(len(client_loaders[cid][0].dataset))
    global_weights = fedavg(client_weights, client_sizes)
    set_weights(global_model, global_weights)
    metrics = evaluate(global_model, test_loader)
    print(f"Round {r}: {metrics}")

We orchestrate the federated learning process by iteratively training local client models and aggregating their parameters using FedAvg. We evaluate the global model after each round to monitor convergence and understand how collective learning improves fraud detection performance.
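To make the aggregation step concrete, here is the same weighted average worked through on plain Python lists instead of model tensors. `fedavg_lists` and the two toy clients are illustrative only.

```python
def fedavg_lists(client_weights, client_sizes):
    # Weighted average of each parameter, weights proportional to sample counts.
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [
        sum(w[i] * (s / total) for w, s in zip(client_weights, client_sizes))
        for i in range(n_params)
    ]

# Client A has 1 sample, client B has 3, so B carries 75% of the weight.
weights = [[1.0, 2.0], [3.0, 4.0]]
sizes = [1, 3]
avg = fedavg_lists(weights, sizes)
print(avg)  # [2.5, 3.5]
```

The larger client pulls the average toward its own parameters, which is why highly uneven client sizes can dominate the global model.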

OPENAI_API_KEY = getpass.getpass("Enter OPENAI_API_KEY (input hidden): ").strip()

if OPENAI_API_KEY:
    os.environ["OPENAI_API_KEY"] = OPENAI_API_KEY

client = OpenAI()

summary = {
    "rounds": ROUNDS,
    "num_clients": NUM_CLIENTS,
    "final_metrics": metrics,
    "client_sizes": [len(client_loaders[c][0].dataset) for c in range(NUM_CLIENTS)],
    "client_fraud_rates": [float(client_data[c][1].mean()) for c in range(NUM_CLIENTS)]
}

prompt = (
    "Write a concise internal fraud-risk report.\n"
    "Include executive summary, metric interpretation, risks, and next steps.\n\n"
    + json.dumps(summary, indent=2)
)

resp = client.responses.create(model="gpt-5.2", input=prompt)
print(resp.output_text)

We transform the technical results into a concise analytical report using an external language model. We accept the API key through hidden keyboard input and generate decision-oriented insights that summarize performance, risks, and recommended next steps.

In conclusion, we showed how to implement federated learning from first principles in a Colab notebook while keeping the setup stable, interpretable, and realistic. We observed how extreme data heterogeneity across clients influences convergence and why careful aggregation and evaluation are critical in fraud-detection settings. We also extended the workflow by generating an automated risk-team report, demonstrating how analytical results can be translated into decision-ready insights. Finally, we presented a practical blueprint for experimenting with federated fraud models that emphasizes privacy awareness, simplicity, and real-world relevance.

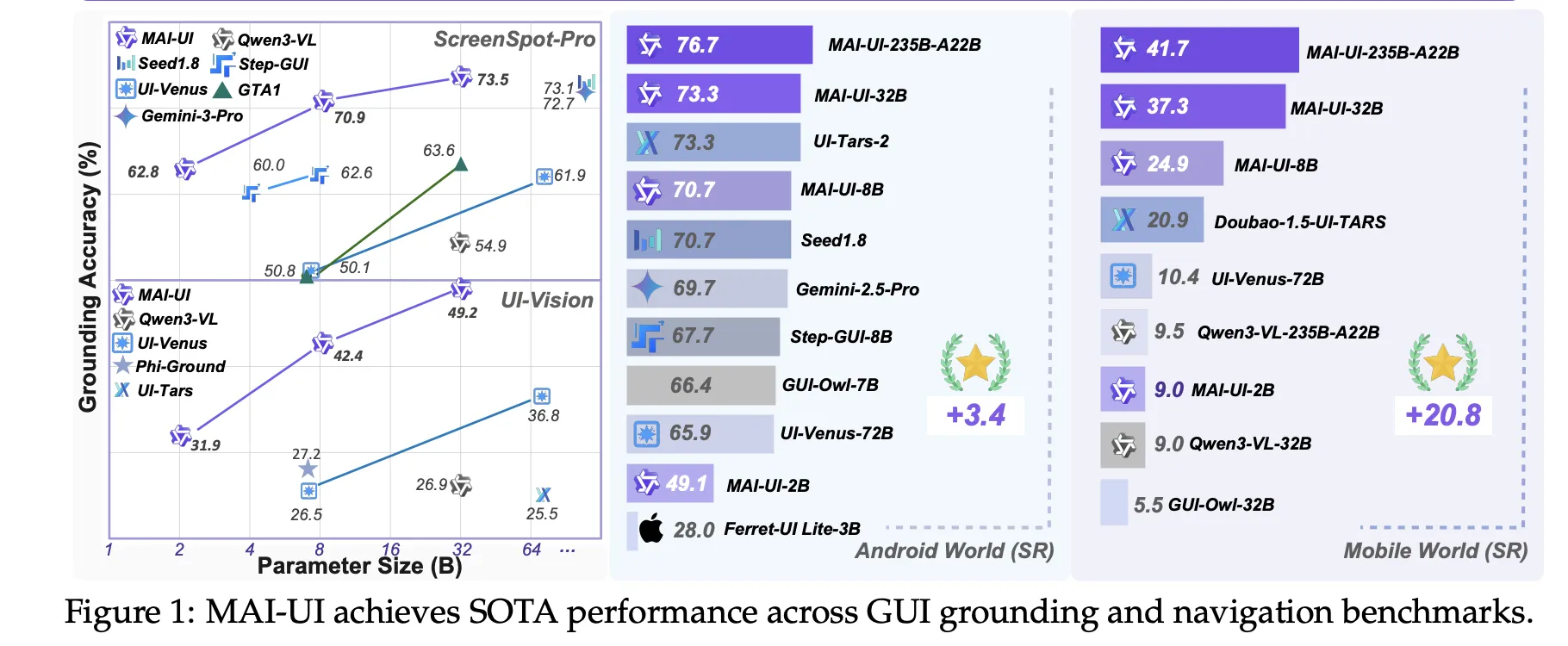

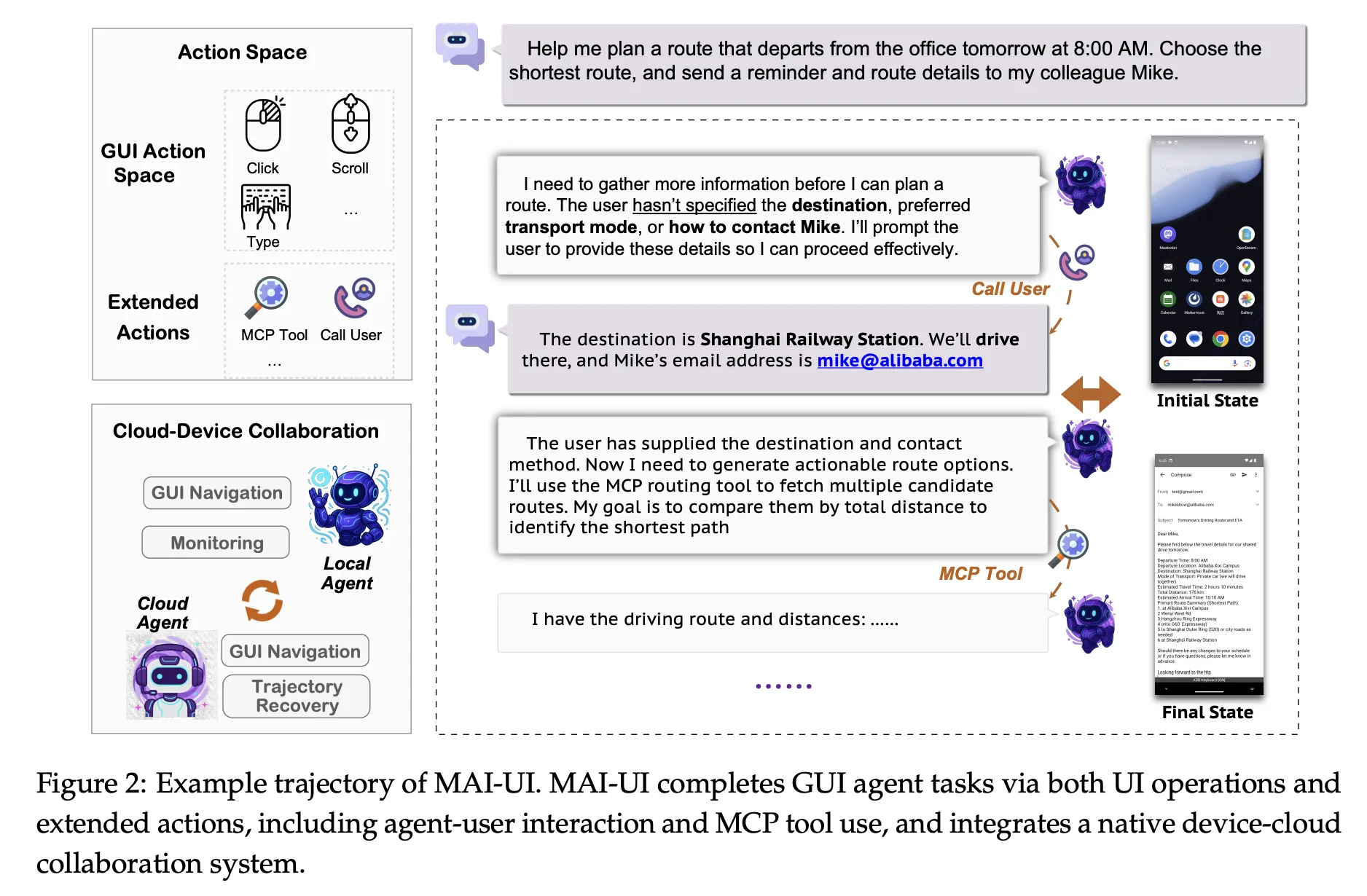

Alibaba Tongyi Lab has released MAI-UI, a family of foundation GUI agents. It natively integrates MCP tool use, agent-user interaction, device-cloud collaboration, and online RL, establishing state-of-the-art results in general GUI grounding and mobile GUI navigation, surpassing Gemini-2.5-Pro, Seed1.8, and UI-Tars-2 on AndroidWorld. The system targets three specific gaps that early GUI agents often ignore: native agent-user interaction, MCP tool integration, and a device-cloud collaboration architecture that keeps privacy-sensitive work on device while still using large cloud models when needed.

MAI-UI is a family of multimodal GUI agents built on Qwen3 VL, with model sizes 2B, 8B, 32B and 235B A22B. These models take natural language instructions and rendered UI screenshots as input, then output structured actions for a live Android environment.

The action space covers standard operations such as clicking elements, swiping, entering text and pressing system buttons. On top of that, MAI-UI introduces explicit actions for answering user questions, asking the user for clarification when the goal is ambiguous, and invoking external tools through MCP tool calls. This makes the agent capable of mixing GUI steps, direct language responses and API level operations in a single trajectory.

From a modeling perspective, MAI-UI unifies three components: a self-evolving navigation data pipeline that includes user interaction and MCP cases, an online RL framework that scales to hundreds of parallel Android instances and long contexts, and a native device-cloud collaboration system that routes execution based on task state and privacy constraints.

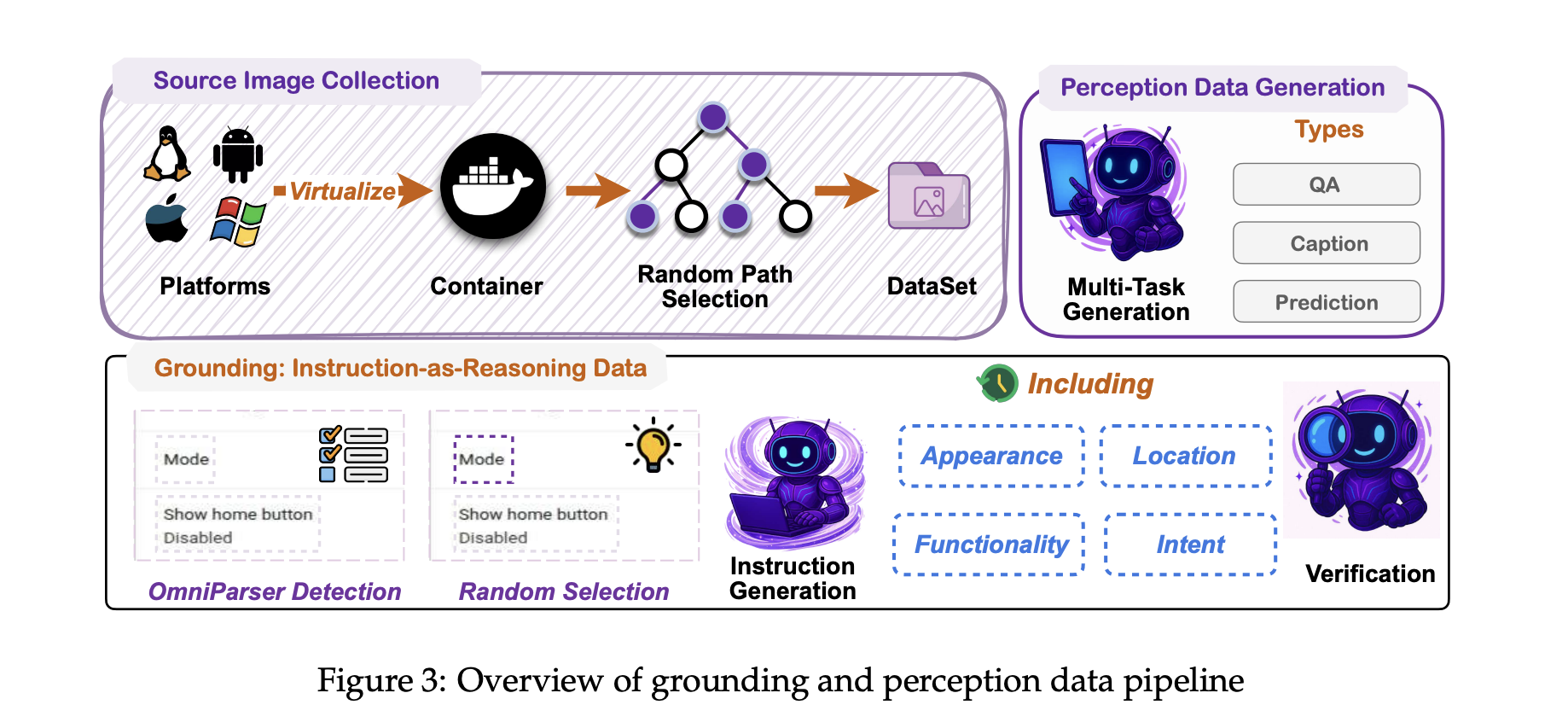

A core requirement for any GUI agent is grounding: mapping free-form language like ‘open monthly billing settings’ to the correct on-screen control. MAI-UI adopts a UI grounding strategy inspired by the earlier UI-Ins work on multi-perspective instruction descriptions.

For each UI element, the training pipeline does not rely on a single caption. Instead, it generates several views of the same element, for example appearance, function, spatial location and user intent. These multiple instructions are treated as reasoning evidence for the model, which must select a point inside the correct bounding box. This reduces the impact of flawed or underspecified instructions, an issue that UI-Ins quantified in existing datasets.

Ground truth boxes are collected from a mix of curated GUI datasets and large scale exploration of virtualized operating systems in containerized environments. Accessibility trees or OCR based parsers are used to align textual metadata with pixel locations. The training objective combines supervised fine tuning with a simple reinforcement signal that rewards correct point in box predictions and valid output format.
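The reinforcement signal described above can be sketched as a small reward function. The `(x, y)` output format, the `format_bonus` value and the function names are assumptions for illustration; the text does not give the actual reward weights.

```python
import re
from typing import Optional, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)

# Hypothetical output format: "(x, y)" with numeric coordinates.
POINT_PATTERN = re.compile(
    r"^\(\s*(-?\d+(?:\.\d+)?)\s*,\s*(-?\d+(?:\.\d+)?)\s*\)$"
)


def parse_point(output: str) -> Optional[Tuple[float, float]]:
    """Return the predicted point, or None if the output format is invalid."""
    match = POINT_PATTERN.match(output.strip())
    if match is None:
        return None
    return float(match.group(1)), float(match.group(2))


def grounding_reward(output: str, box: Box, format_bonus: float = 0.1) -> float:
    """Reward valid formatting, plus 1.0 when the point lies inside the box."""
    point = parse_point(output)
    if point is None:
        return 0.0  # malformed output earns nothing
    x, y = point
    x_min, y_min, x_max, y_max = box
    inside = x_min <= x <= x_max and y_min <= y <= y_max
    return format_bonus + (1.0 if inside else 0.0)


hit = grounding_reward("(50, 60)", (0, 0, 100, 100))
miss = grounding_reward("(150, 60)", (0, 0, 100, 100))
malformed = grounding_reward("click the button", (0, 0, 100, 100))
```

Under these assumptions a well-formed but wrong prediction still earns the small format bonus, while a malformed output earns nothing.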

On public GUI grounding benchmarks, the resulting MAI-UI models reach 73.5 percent accuracy on ScreenSpot Pro with adaptive zoom in, 91.3 percent on MMBench GUI L2, 70.9 percent on OSWorld G and 49.2 percent on UI Vision. These numbers surpass Gemini 3 Pro and Seed1.8 on ScreenSpot Pro, and significantly outperform earlier open models on UI Vision.

Navigation is harder than grounding because the agent must maintain context across many steps, possibly across applications, while interacting with the user and tools. To build robust navigation behavior, Tongyi Lab uses a self evolving data pipeline.

Seed tasks come from app manuals, hand designed scenarios and filtered public data. Parameters such as dates, limits and filter values are perturbed to expand coverage, and object level substitutions are applied while staying within the same use case. Multiple agents, together with human annotators, execute these tasks in Android environments to produce trajectories. A judge model then evaluates these trajectories, keeps the longest correct prefixes and filters out low quality segments. The next supervised training round uses the union of fresh human traces and high quality model rollouts, so the data distribution gradually follows the current policy.
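The "keep the longest correct prefix" step can be illustrated with a short sketch. The judge is modeled here as a plain callable and steps as strings; both are assumptions for illustration, not the pipeline's real interfaces.

```python
from typing import Callable, List, Sequence


def longest_correct_prefix(
    steps: Sequence[str], is_correct: Callable[[str], bool]
) -> List[str]:
    """Keep steps up to, but not including, the first one the judge rejects."""
    kept: List[str] = []
    for step in steps:
        if not is_correct(step):
            break
        kept.append(step)
    return kept


# Toy judge: any step tagged "WRONG" is treated as an error.
def toy_judge(step: str) -> bool:
    return not step.startswith("WRONG")


rollout = ["open app", "tap settings", "WRONG tap logout", "tap billing"]
prefix = longest_correct_prefix(rollout, toy_judge)
```

Truncating at the first error means a partly failed rollout still contributes its correct early steps to the next training round.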

MAI-UI is evaluated on MobileWorld, a benchmark from the same team that includes 201 tasks across 20 applications. MobileWorld explicitly mixes three categories: pure GUI tasks, agent-user interaction tasks that require natural language back and forth with the user, and MCP-augmented tasks that require tool calls.

On MobileWorld, MAI-UI reaches 41.7 percent overall success, a gain of about 20.8 points over the strongest end-to-end GUI baselines, and is competitive with agentic frameworks that use larger proprietary planners such as Gemini 3 Pro.

Static data is not enough for robustness in dynamic mobile apps. MAI-UI therefore uses an online RL framework where the agent interacts directly with containerized Android Virtual Devices. The environment stack packs rooted AVD images and backend services into Docker containers, exposes standard reset and step operations over a service layer and supports more than 35 self hosted apps from e commerce, social, productivity and enterprise categories.

The RL setup uses an asynchronous on policy method, GRPO, implemented on top of verl. It combines tensor, pipeline and context parallelism, similar to Megatron style training, so that the model can learn from trajectories with up to 50 steps and very long token sequences. Rewards come from rule based verifiers or model judges that detect task completion, along with penalties for obvious looping behaviors. Only recent successful trajectories are kept in task specific buffers to stabilize learning.
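As a rough illustration of the group-relative idea behind GRPO, the sketch below scores rollouts of the same task and normalizes each reward against its group. The reward values, the loop penalty weight and the function names are assumptions; the text does not specify MAI-UI's exact reward shaping.

```python
from statistics import mean, pstdev
from typing import List


def trajectory_reward(
    completed: bool, repeated_actions: int, loop_penalty: float = 0.1
) -> float:
    """Completion reward minus a penalty per repeated action (hypothetical weights)."""
    base = 1.0 if completed else 0.0
    return base - loop_penalty * repeated_actions


def group_relative_advantages(rewards: List[float], eps: float = 1e-6) -> List[float]:
    """Normalize each reward against the mean and std of its own rollout group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four rollouts of the same task: two succeed, two fail.
rewards = [
    trajectory_reward(True, 0),
    trajectory_reward(False, 0),
    trajectory_reward(True, 0),
    trajectory_reward(False, 0),
]
advantages = group_relative_advantages(rewards)
```

Because advantages are computed relative to the group, no separate value network is needed to provide a baseline.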

Scaling this RL environment matters in practice. The research team shows that increasing the number of parallel GUI environments from 32 to 512 yields about 5.2 percentage points improvement on navigation success, and increasing the allowed environment steps from 15 to 50 adds about 4.3 points.

On the AndroidWorld benchmark, which evaluates online navigation in a standard Android app suite, the largest MAI-UI variant reaches 76.7 percent success, surpassing UI-Tars-2, Gemini 2.5 Pro and Seed1.8.


US support for nuclear energy is soaring. Meanwhile, coal plants are on their way out and electricity-sucking data centers are meeting huge pushback. Welcome to the next front in the energy battle.

LLMRouter is an open source routing library from the U Lab at the University of Illinois Urbana Champaign that treats model selection as a first class system problem. It sits between applications and a pool of LLMs and chooses a model for each query based on task complexity, quality targets, and cost, all exposed through a unified Python API and CLI. The project ships with more than 16 routing models, a data generation pipeline over 11 benchmarks, and a plugin system for custom routers.

LLMRouter organizes routing algorithms into four families: Single-Round Routers, Multi-Round Routers, Personalized Routers, and Agentic Routers. Single-round routers include knnrouter, svmrouter, mlprouter, mfrouter, elorouter, routerdc, automix, hybrid_llm, graphrouter, causallm_router, and the baselines smallest_llm and largest_llm. These models implement strategies such as k-nearest neighbors, support vector machines, multilayer perceptrons, matrix factorization, Elo rating, dual contrastive learning, automatic model mixing, and graph-based routing.
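To show what a k-nearest-neighbor routing strategy looks like in principle, here is a minimal, dependency-free sketch. It is not LLMRouter's knnrouter implementation; the record layout, the toy embeddings and the model names `small` and `large` are assumptions for illustration.

```python
import math
from collections import defaultdict
from typing import Dict, List, Tuple

# (query embedding, model name, observed score); hypothetical layout.
Record = Tuple[List[float], str, float]


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def knn_route(query_emb: List[float], records: List[Record], k: int = 3) -> str:
    """Pick the model with the best mean score among the k most similar queries."""
    neighbors = sorted(
        records, key=lambda r: cosine(query_emb, r[0]), reverse=True
    )[:k]
    scores: Dict[str, List[float]] = defaultdict(list)
    for _, model, score in neighbors:
        scores[model].append(score)
    return max(scores, key=lambda m: sum(scores[m]) / len(scores[m]))


history: List[Record] = [
    ([1.0, 0.0], "small", 0.9),
    ([0.9, 0.1], "small", 0.8),
    ([0.8, 0.2], "large", 0.4),
    ([0.0, 1.0], "large", 0.95),
    ([0.1, 0.9], "large", 0.9),
]
easy_choice = knn_route([1.0, 0.0], history)
hard_choice = knn_route([0.0, 1.0], history)
```

The router never inspects the query text itself: it relies only on how candidate models performed on similar past queries.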

Multi round routing is exposed through router_r1, a pre trained instance of Router R1 integrated into LLMRouter. Router R1 formulates multi LLM routing and aggregation as a sequential decision process where the router itself is an LLM that alternates between internal reasoning steps and external model calls. It is trained with reinforcement learning using a rule based reward that balances format, outcome, and cost. In LLMRouter, router_r1 is available as an extra installation target with pinned dependencies tested on vllm==0.6.3 and torch==2.4.0.

Personalized routing is handled by gmtrouter, described as a graph based personalized router with user preference learning. GMTRouter represents multi turn user LLM interactions as a heterogeneous graph over users, queries, responses, and models. It runs a message passing architecture over this graph to infer user specific routing preferences from few shot interaction data, and experiments show accuracy and AUC gains over non personalized baselines.

Agentic routers in LLMRouter extend routing to multi step reasoning workflows. knnmultiroundrouter uses k nearest neighbor reasoning over multi turn traces and is intended for complex tasks. llmmultiroundrouter exposes an LLM based agentic router that performs multi step routing without its own training loop. These agentic routers share the same configuration and data formats as the other router families and can be swapped through a single CLI flag.

LLMRouter ships with a full data generation pipeline that turns standard benchmarks and LLM outputs into routing datasets. The pipeline supports 11 benchmarks: Natural QA, Trivia QA, MMLU, GPQA, MBPP, HumanEval, GSM8K, CommonsenseQA, MATH, OpenBookQA, and ARC Challenge. It runs in three explicit stages. First, data_generation.py extracts queries and ground truth labels and creates train and test JSONL splits. Second, generate_llm_embeddings.py builds embeddings for candidate LLMs from metadata. Third, api_calling_evaluation.py calls LLM APIs, evaluates responses, and fuses scores with embeddings into routing records.

The pipeline outputs query files, LLM embedding JSON, query embedding tensors, and routing data JSONL files. A routing entry includes fields such as task_name, query, ground_truth, metric, model_name, response, performance, embedding_id, and token_num. Configuration is handled entirely through YAML, so engineers point the scripts to new datasets and candidate model lists without modifying code.
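For orientation, a single routing entry with the fields listed above might look like the following when written as one JSONL line. The field names come from the text; every value, and the value types, are made-up assumptions for illustration.

```python
import json

# Field names follow the article; all values here are hypothetical.
record = {
    "task_name": "gsm8k",
    "query": "What is 12 * 7?",
    "ground_truth": "84",
    "metric": "exact_match",
    "model_name": "example-model",
    "response": "84",
    "performance": 1.0,
    "embedding_id": 0,
    "token_num": 12,
}

# JSONL stores one JSON object per line.
line = json.dumps(record)
parsed = json.loads(line)
```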

For interactive use, llmrouter chat launches a Gradio based chat frontend over any router and configuration. The server can bind to a custom host and port and can expose a public sharing link. Query modes control how routing sees context. current_only uses only the latest user message, full_context concatenates the dialogue history, and retrieval augments the query with the top k similar historical queries. The UI visualizes model choices in real time and is driven by the same router configuration used for batch inference.
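The three query modes can be sketched as a function that decides what text the router sees. This is an illustration of the idea only: the function name, the assumption that `history` holds user messages with the latest one last, and the word-overlap similarity used for `retrieval` are all stand-ins, not LLMRouter's implementation.

```python
from typing import List


def word_overlap(a: str, b: str) -> float:
    """Jaccard overlap of lowercase tokens; a stand-in for embedding similarity."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    return len(ta & tb) / len(ta | tb) if ta | tb else 0.0


def build_routing_query(history: List[str], mode: str, top_k: int = 2) -> str:
    """Assemble the text shown to the router for a given query mode."""
    current = history[-1]
    if mode == "current_only":
        return current
    if mode == "full_context":
        return "\n".join(history)
    if mode == "retrieval":
        past = history[:-1]
        ranked = sorted(past, key=lambda q: word_overlap(q, current), reverse=True)
        return "\n".join(ranked[:top_k] + [current])
    raise ValueError(f"unknown query mode: {mode}")


history = [
    "How do I sort a list in Python?",
    "What is the capital of France?",
    "How do I reverse a list in Python?",
]
latest = build_routing_query(history, "current_only")
full = build_routing_query(history, "full_context")
retrieved = build_routing_query(history, "retrieval", top_k=1)
```

The trade-off is between cost and context: `current_only` keeps routing inputs short, `full_context` gives the router everything, and `retrieval` sits in between.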

LLMRouter also provides a plugin system for custom routers. New routers live under custom_routers, subclass MetaRouter, and implement route_single and route_batch. Configuration files under that directory define data paths, hyperparameters, and optional default API endpoints. Plugin discovery scans the project custom_routers folder, a ~/.llmrouter/plugins directory, and any extra paths in the LLMROUTER_PLUGINS environment variable. Example custom routers include randomrouter, which selects a model at random, and thresholdrouter, which is a trainable router that estimates query difficulty.
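The discovery order described above can be sketched as a function that collects candidate directories. The function name, the use of `os.pathsep` as the separator inside `LLMROUTER_PLUGINS`, and the ordering are assumptions; LLMRouter's actual discovery code may differ.

```python
import os
from pathlib import Path
from typing import List, Mapping


def plugin_search_paths(project_root: Path, env: Mapping[str, str]) -> List[Path]:
    """Collect directories to scan: project folder, user folder, then extras."""
    paths = [
        project_root / "custom_routers",
        Path.home() / ".llmrouter" / "plugins",
    ]
    # Assumed: extra paths are separated the same way as PATH entries.
    extra = env.get("LLMROUTER_PLUGINS", "")
    paths.extend(Path(p) for p in extra.split(os.pathsep) if p)
    return paths


example_env = {"LLMROUTER_PLUGINS": os.pathsep.join(["/opt/routers", "/srv/routers"])}
paths = plugin_search_paths(Path("/proj"), example_env)
```

In practice you would pass `os.environ` as `env`; taking it as a parameter keeps the function easy to test.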

LLMRouter bundles routers such as knnrouter, graphrouter, routerdc, router_r1, and gmtrouter, all exposed through a unified config and CLI.

router_r1 integrates the Router R1 framework, where an LLM router interleaves internal “think” steps with external “route” calls and is trained with a rule-based reward that combines format, outcome, and cost to optimize performance-cost trade-offs.

gmtrouter models users, queries, responses and LLMs as nodes in a heterogeneous graph and uses message passing to learn user-specific routing preferences from few-shot histories, achieving up to around 21% accuracy gains and substantial AUC improvements over strong baselines.

The plugin system is built on MetaRouter, which allows teams to register custom routers while reusing the same routing datasets and infrastructure.


In this tutorial, we build an advanced, end-to-end multi-agent research workflow using the CAMEL framework. We design a coordinated society of agents (Planner, Researcher, Writer, Critic, and Finalizer) that collaboratively transform a high-level topic into a polished, evidence-grounded research brief. We securely integrate the OpenAI API, orchestrate agent interactions programmatically, and add lightweight persistent memory to retain knowledge across runs. By structuring the system around clear roles, JSON-based contracts, and iterative refinement, we demonstrate how CAMEL can be used to construct reliable, controllable, and scalable agentic pipelines.

!pip -q install "camel-ai[all]" "python-dotenv" "rich"

import os
import json
import time
from typing import Dict, Any
from rich import print as rprint


def load_openai_key() -> str:
    # Prefer Colab secrets; fall back to a hidden prompt outside Colab.
    key = None
    try:
        from google.colab import userdata
        key = userdata.get("OPENAI_API_KEY")
    except Exception:
        key = None
    if not key:
        import getpass
        key = getpass.getpass("Enter OPENAI_API_KEY (hidden): ").strip()
    if not key:
        raise ValueError("OPENAI_API_KEY is required.")
    return key


os.environ["OPENAI_API_KEY"] = load_openai_key()

We set up the execution environment and securely load the OpenAI API key using Colab secrets or a hidden prompt. We ensure the runtime is ready by installing dependencies and configuring authentication so the workflow can run safely without exposing credentials.

from camel.models import ModelFactory
from camel.types import ModelPlatformType, ModelType
from camel.agents import ChatAgent
from camel.toolkits import SearchToolkit

# A low temperature keeps agent outputs more consistent between runs.
MODEL_CFG = {"temperature": 0.2}

# One shared model instance is reused by every agent.
model = ModelFactory.create(
    model_platform=ModelPlatformType.OPENAI,
    model_type=ModelType.GPT_4O,
    model_config_dict=MODEL_CFG,
)

We initialize the CAMEL model configuration and create a shared language model instance using the ModelFactory abstraction. We standardize model behavior across all agents to keep reasoning consistent throughout the multi-agent pipeline.

MEM_PATH = "camel_memory.json"


def mem_load() -> Dict[str, Any]:
    # Start with an empty history if no memory file exists yet.
    if not os.path.exists(MEM_PATH):
        return {"runs": []}
    with open(MEM_PATH, "r", encoding="utf-8") as f:
        return json.load(f)


def mem_save(mem: Dict[str, Any]) -> None:
    with open(MEM_PATH, "w", encoding="utf-8") as f:
        json.dump(mem, f, ensure_ascii=False, indent=2)


def mem_add_run(topic: str, artifacts: Dict[str, str]) -> None:
    # Append one timestamped run and write the whole file back.
    mem = mem_load()
    mem["runs"].append({"ts": int(time.time()), "topic": topic, "artifacts": artifacts})
    mem_save(mem)


def mem_last_summaries(n: int = 3) -> str:
    # Summarize the n most recent runs, one per line.
    mem = mem_load()
    runs = mem.get("runs", [])[-n:]
    if not runs:
        return "No past runs."
    return "\n".join([f"{i+1}. topic={r['topic']} | ts={r['ts']}" for i, r in enumerate(runs)])

We implement a lightweight persistent memory layer backed by a JSON file. We store artifacts from each run and retrieve summaries of previous executions, allowing us to introduce continuity and historical context across sessions.

def make_agent(role: str, goal: str, extra_rules: str = "") -> ChatAgent:
    # Build a system prompt from the role, its goal, and its output contract.
    system = (
        f"You are {role}.\n"
        f"Goal: {goal}\n"
        f"{extra_rules}\n"
        "Output must be crisp, structured, and directly usable by the next agent."
    )
    return ChatAgent(model=model, system_message=system)


planner = make_agent(
    "Planner",
    "Create a compact plan and research questions with acceptance criteria.",
    "Return JSON with keys: plan, questions, acceptance_criteria."
)

researcher = make_agent(
    "Researcher",
    "Answer questions using web search results.",
    "Return JSON with keys: findings, sources, open_questions."
)

writer = make_agent(
    "Writer",
    "Draft a structured research brief.",
    "Return Markdown only."
)

critic = make_agent(
    "Critic",
    "Identify weaknesses and suggest fixes.",
    "Return JSON with keys: issues, fixes, rewrite_instructions."
)

finalizer = make_agent(
    "Finalizer",
    "Produce the final improved brief.",
    "Return Markdown only."
)

search_tool = SearchToolkit().search_duckduckgo

# Recreate the Researcher with the same system message plus the search tool.
researcher = ChatAgent(
    model=model,
    system_message=researcher.system_message,
    tools=[search_tool],
)

We define the core agent roles and their responsibilities within the workflow. We construct specialized agents with clear goals and output contracts, and we enhance the Researcher by attaching a web search tool for evidence-grounded responses.

def step_json(agent: ChatAgent, prompt: str) -> Dict[str, Any]:
    res = agent.step(prompt)
    txt = res.msgs[0].content.strip()
    try:
        return json.loads(txt)
    except Exception:
        # Fall back to the raw text when the reply is not valid JSON.
        return {"raw": txt}


def step_text(agent: ChatAgent, prompt: str) -> str:
    res = agent.step(prompt)
    return res.msgs[0].content

We abstract interaction patterns with agents into helper functions that enforce structured JSON or free-text outputs. We simplify orchestration by handling parsing and fallback logic centrally, making the pipeline more robust to formatting variability.
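Models often wrap JSON replies in Markdown code fences, in which case a bare json.loads call fails and step_json falls back to the raw text. The sketch below shows one possible way to tolerate that. The helper name `parse_json_loose` is hypothetical and not part of CAMEL, and the fence marker is built with string repetition only to keep this listing readable.

```python
import json
import re
from typing import Any, Dict

FENCE_MARK = "`" * 3  # three backticks, the Markdown code-fence marker
FENCE = re.compile(FENCE_MARK + r"(?:json)?\s*(.*?)" + FENCE_MARK, re.DOTALL)


def parse_json_loose(text: str) -> Dict[str, Any]:
    """Parse a JSON object, tolerating an optional Markdown fence around it."""
    candidate = text.strip()
    match = FENCE.search(candidate)
    if match:
        candidate = match.group(1).strip()
    try:
        parsed = json.loads(candidate)
    except json.JSONDecodeError:
        return {"raw": text}
    # Keep the same contract as step_json: always return a dict.
    return parsed if isinstance(parsed, dict) else {"raw": text}


plain = parse_json_loose('{"plan": ["a", "b"]}')
fenced = parse_json_loose(FENCE_MARK + 'json\n{"plan": ["x"]}\n' + FENCE_MARK)
broken = parse_json_loose("not json at all")
```

A helper like this could replace the json.loads call inside step_json without changing how the rest of the workflow consumes the result.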

def run_workflow(topic: str) -> Dict[str, str]:
    # Show recent runs for context before starting a new one.
    rprint(mem_last_summaries(3))

    plan = step_json(
        planner,
        f"Topic: {topic}\nCreate a tight plan and research questions."
    )
    research = step_json(
        researcher,
        f"Research the topic using web search.\n{json.dumps(plan)}"
    )
    draft = step_text(
        writer,
        f"Write a research brief using:\n{json.dumps(research)}"
    )
    critique = step_json(
        critic,
        f"Critique the draft:\n{draft}"
    )
    final = step_text(
        finalizer,
        f"Rewrite using critique:\n{json.dumps(critique)}\nDraft:\n{draft}"
    )

    # Persist every intermediate artifact alongside the final brief.
    artifacts = {
        "plan_json": json.dumps(plan, indent=2),
        "research_json": json.dumps(research, indent=2),
        "draft_md": draft,
        "critique_json": json.dumps(critique, indent=2),
        "final_md": final,
    }
    mem_add_run(topic, artifacts)
    return artifacts


TOPIC = "Agentic multi-agent research workflow with quality control"
artifacts = run_workflow(TOPIC)
print(artifacts["final_md"])

We orchestrate the complete multi-agent workflow from planning to finalization. We sequentially pass artifacts between agents, apply critique-driven refinement, persist results to memory, and produce a finalized research brief ready for downstream use.

In conclusion, we implemented a practical CAMEL-based multi-agent system that mirrors real-world research and review workflows. We showed how clearly defined agent roles, tool-augmented reasoning, and critique-driven refinement lead to higher-quality outputs while reducing hallucinations and structural weaknesses. We also established a foundation for extensibility by persisting artifacts and enabling reuse across sessions. This approach allows us to move beyond single-prompt interactions and toward robust agentic systems that can be adapted for research, analysis, reporting, and decision-support tasks at scale.
